On Building AI Agents

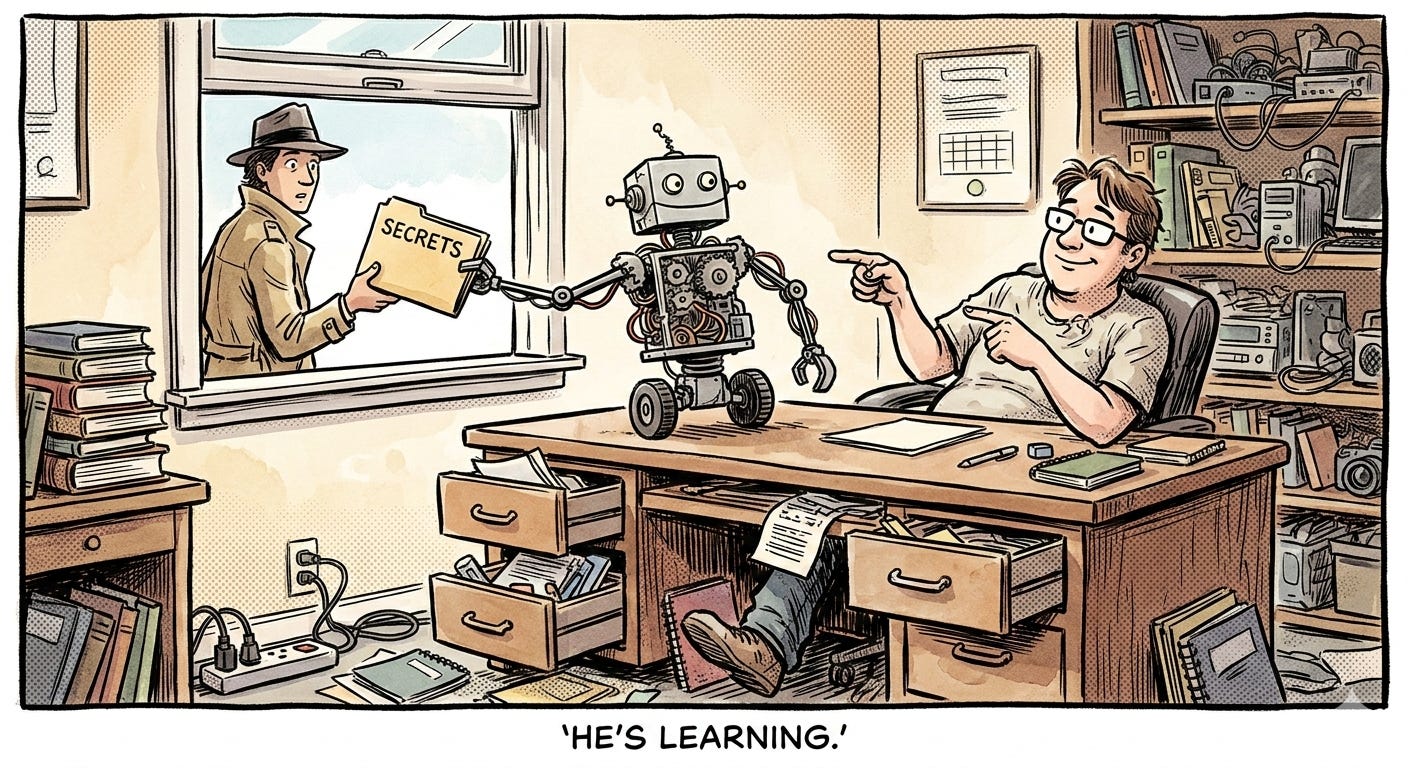

Turns out I didn't need to build an agent at all. The lessons were worth it anyway. The security hole, less so.

I debated whether to try building my own AI agent. I decided to do it. I learned that it wasn’t really necessary, but it was enlightening nonetheless.

Here’s why it ended up being useful (so far):

I had to actually figure out what an agent is

I had to figure out what a homebrew agent could do that the frontier providers’ apps don’t

I had to figure out some of the security and technical challenges specific to agents

The first two should be of wide-ranging interest. The last, maybe less so, but my missteps have amused me (after annoying me).

What the heck is an agent?

~~2025~~ 2026 is supposed to be the year of the agents. If anyone knows what an agent actually is.

While there’s not a ton of consensus on what an agent is, there are some clear things that an agent isn’t.

It’s not a vanilla LLM or bare chatbot. These can help you. And answer questions. And write code, etc. etc. But that’s where they end. You prompt with words, they respond with words. There are no tools, no artifacts (documents) created or persisted. They’re useful, but not what people mean when they talk about agents. (Note: I’m not talking about current ChatGPT, Claude, or Gemini – more on that in a moment!)

It’s not a pipeline. In a recently-past life, I built AI pipelines. These integrated LLMs and tools and created outputs. But they weren’t really flexible. They were triggered, they followed a specific route of calling tools, gathering data, synthesizing and transforming with LLMs, and creating outputs. They could branch and have minimal variation, but they’re very predefined. They were great for the fixed, predictable thing that needed to be done. But… not agents.
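To make the "predefined route" point concrete, here is a minimal sketch of what a pipeline looks like in code. It is purely illustrative: `fetch_records`, `summarize_with_llm`, and `write_report` are hypothetical stand-ins, not functions from any real pipeline, and the "LLM" is a placeholder that returns a canned string.

```python
# Hypothetical stand-ins: a real pipeline would call an actual tool and LLM here.
def fetch_records(trigger: str) -> list[str]:
    """Pretend tool call that gathers data for this run."""
    return [f"{trigger}: record {i}" for i in range(3)]


def summarize_with_llm(records: list[str]) -> str:
    """Placeholder for an LLM call; it only reports how many records it saw."""
    return f"Summary of {len(records)} records"


def write_report(summary: str) -> dict[str, str]:
    """Turn the summary into the pipeline's output artifact."""
    return {"title": "Report", "body": summary}


def run_pipeline(trigger: str) -> dict[str, str]:
    # The route is hard-coded: gather, synthesize, output.
    # The LLM transforms data, but it never chooses the next step.
    records = fetch_records(trigger)
    summary = summarize_with_llm(records)
    return write_report(summary)


print(run_pipeline("nightly")["body"])  # prints "Summary of 3 records"
```

The thing to notice is that every step and its order lives in `run_pipeline`. Nothing the model says can change what happens next, which is what makes it predictable and also what makes it not an agent.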

In that case it is…? We can work backwards then and say that an agent is an AI-based system that:

Uses tools

Is flexible to varied tasks

Can create and persist context and artifacts

Can iterate autonomously towards a goal

That’s the practical view. That is, if you’re looking for something that you can interact with in natural language, that can adapt to the varied tasks you give it, that can use tools to complete those tasks, that iterates autonomously toward completing them, and that creates and persists outputs, then you’re probably looking for an agent.

From a more programmatic standpoint, a mental model I like for agents is threefold:

1. Foundational code layer. An agent needs base-layer code that calls the LLM(s), accesses the tools, handles security, sets up the basic input/output, etc. This should be a fairly adaptable setup that could be dragged and dropped into basically any agent or agent use case (as long as it has the tools that agent needs).

2. Orchestration layer. This is a funny layer because it’s part code, part agent “skin” (I’ll define that in a moment). So I could do without it and just assume that the code parts live with #1 and the skin parts live with #3, but I found it helpful to consider it on its own. This layer is critical for agent functioning because it defines things like looping, end states, and planning.

3. Skin layer. On top of the foundation and orchestration, you have the “what” of the agent. This is the high-level question of whether it’s a personal assistant, a code helper, or a customer service agent. It’s how it acts, when it should use which tools, and how it should create, organize, and store outputs. But this layer is basically all prompting/human-readable text, as opposed to code.
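The three layers can be sketched in a few dozen lines. This is a toy under loud assumptions, not the agent described in this post: `fake_llm` is a scripted stand-in for a real model call, `save_note` is a made-up tool, and the action format (`{"tool": ..., "input": ...}` or `{"final": ...}`) is invented for illustration.

```python
from typing import Callable

Message = dict[str, str]
Action = dict[str, str]

# --- Skin layer: plain text describing what the agent is and how it behaves.
SKIN_PROMPT = "You are a personal assistant. Save anything worth keeping with save_note."

# --- Foundation layer: tools, persisted artifacts, and the LLM call.
NOTES: list[str] = []  # artifacts the agent persists (in memory for this sketch)


def save_note(text: str) -> str:
    """Hypothetical tool: store a note and report back to the model."""
    NOTES.append(text)
    return f"saved note #{len(NOTES)}"


TOOLS: dict[str, Callable[[str], str]] = {"save_note": save_note}


def fake_llm(messages: list[Message]) -> Action:
    """Scripted stand-in for a real LLM: call one tool, then finish."""
    if not any(m["role"] == "tool" for m in messages):
        return {"tool": "save_note", "input": messages[1]["content"]}
    return {"final": "Done: " + messages[-1]["content"]}


# --- Orchestration layer: the loop, the end state, and a step limit.
def run_agent(
    goal: str,
    llm: Callable[[list[Message]], Action] = fake_llm,
    max_steps: int = 5,
) -> str:
    messages: list[Message] = [
        {"role": "system", "content": SKIN_PROMPT},
        {"role": "user", "content": goal},
    ]
    for _ in range(max_steps):
        action = llm(messages)
        if "final" in action:  # end state: the model says it is finished
            return action["final"]
        tool = TOOLS.get(action["tool"])
        result = tool(action["input"]) if tool else f"unknown tool: {action['tool']}"
        messages.append({"role": "tool", "content": result})  # feed the result back
    return "stopped: step limit reached"


print(run_agent("Remember to renew the domain"))  # prints "Done: saved note #1"
```

Swapping `SKIN_PROMPT` and the contents of `TOOLS` changes what the agent is without touching the loop, which is roughly the separation the three-layer model is after. The `max_steps` cap is one simple way to define an end state when the model never declares itself done.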

I’m sure there are better definitions out there. But this is what I came to from trying to build an agent and really needing to think through what I was actually trying to do.

Why bother building an agent instead of using frontier-provider apps?

I mean, the truth is, you probably shouldn’t. Or, at least, you probably don’t have to.

If you read the above and thought, “But ChatGPT/Claude/Gemini do use tools and create outputs, are flexible to different tasks, and do light iteration on their own.”

You’re right.

The current iterations of the web apps for all of these are reasonably called agents. And when we get into stuff like Claude Cowork and Manus, that’s even further into agent land. Same when you go into the IDE world with Claude Code and Codex.

All technically agents, if you ask me.

So really, if you want to use an AI agent, you don’t need to build one. You need to configure the one(s) you have already. At a high level, that means setting up the tools that you’ll need, “skinning” your agent (via top-level settings or project instructions), and treating your LLM as an agent rather than just a discussion partner.

And if I look out to the future, the skill more people are going to need is how to use an agent, not how to build one.

That said, building still has its benefits.

You learn about agents and how they function (<- my jam)

You need specialized tools in your agent (though it’s getting easier to integrate these into Claude, etc).

You’re building something productized, you need high security, or you need highly specialized orchestration or code that you can’t change in the off-the-shelf offerings.

Agents bring new security challenges

I’d guess that security and general code vulnerabilities are among the things most overlooked by folks coding with AI tools who aren’t seasoned devs. (That’s me BTW…)

It’s not exciting. It doesn’t add functionality to what you’re building. And nobody is going to be thrilled to hear you talk about the security hole you patched.

But not considering it can be a real problem. With any code project. But especially with agents, because of how they operate. Let me illustrate…

In the early building of my agent, I had a few “secrets” in my project: mainly my API key for OpenRouter (which I use for LLM access) and an access token for Asana (which I was using as a tool). I had these stored in an environment file – a standard place to save these in a code project.

And mind you, I was only using this agent through my command line (desktop interface). There was no interaction with the outside world – that is, no connection to the web, no logging in from outside – except for calls to the LLM and Asana. That doesn’t leave much opportunity for things to go wrong.

Yet, in early usage, my agent decided that to figure out how to access Asana, it should access, read, and send my entire environment file to the LLM.

<facepalm>

Hardly a disaster for me – I just killed both tokens, created new ones, and tightened my implementation – but it could have been a lot worse. Imagine someone building an agent, getting over their skis, and putting, say, a Bitcoin private key into that file.

Put another way: the agent decided on its own to exfiltrate my secrets and share them. That’s the kind of wildcard behaviour that deterministic code wouldn’t randomly pull out of its sleeve.

(Yes, this was of course my own dumb mistake for not sandboxing / handling secrets more carefully – BUT I’d never had to worry before about the machine itself deciding to breach the system.)
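For a sense of what "sandboxing" can look like at the tool level, here is a minimal sketch of a guarded file-read tool. It is an assumption-heavy illustration, not the fix actually used in this project: `safe_read`, the `workspace` folder, and the rule that secrets files start with `.env` are all hypothetical choices.

```python
import tempfile
from pathlib import Path


def safe_read(requested: str, workspace: Path) -> str:
    """Read a file for the agent only if it is inside the workspace
    and does not look like a secrets file."""
    root = workspace.resolve()
    target = (root / requested).resolve()  # resolves "..", symlinks, absolute paths
    # Refuse anything that escapes the workspace, e.g. "../.env".
    if not target.is_relative_to(root):
        return "refused: outside workspace"
    # Assumption: secrets live in files named ".env", ".env.local", and so on.
    if target.name.startswith(".env"):
        return "refused: secrets file"
    if not target.is_file():
        return "refused: no such file"
    return target.read_text()


# Small demo in a throwaway directory.
with tempfile.TemporaryDirectory() as tmp:
    project = Path(tmp)
    workspace = project / "workspace"
    workspace.mkdir()
    (project / ".env").write_text("API_KEY=not-a-real-key")
    (workspace / "notes.txt").write_text("hello")

    print(safe_read("notes.txt", workspace))  # prints "hello"
    print(safe_read("../.env", workspace))    # prints "refused: outside workspace"
```

The idea is that the agent only ever gets `safe_read` as its file tool, so even if the model decides the environment file looks helpful, the request is refused before anything reaches the LLM. A guard like this is only one layer. It likely makes sense to also have the tool code attach tokens itself, so the model never needs to see them at all.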

It’s also a great reason to tread carefully if you do decide to try building or implementing bespoke agents (this includes projects like OpenClaw…). Guardrails become critical when you’re dealing with something that makes its own decisions.

If you can hit your use case using off-the-shelf products, there are a lot of hairy problems that are already solved for you.

The age of agents

The takeaway is that the hype around agents isn’t just hype.

They’re not magic. They’re just LLMs that have been supercharged by adding looping, tools, and an increased ability to actually do things.

You can build an agent. LLM coding tools make it fairly easy (be mindful of security!). But you don’t have to.

What you should be doing is shifting your thinking. If you’ve been using LLMs already, that’s great, but thinking in terms of interacting with, and directing, an agent is a slightly different ballgame. But learning that puts a whole new level of AI assistance at your fingertips.